Eurographics 2014

Abstract

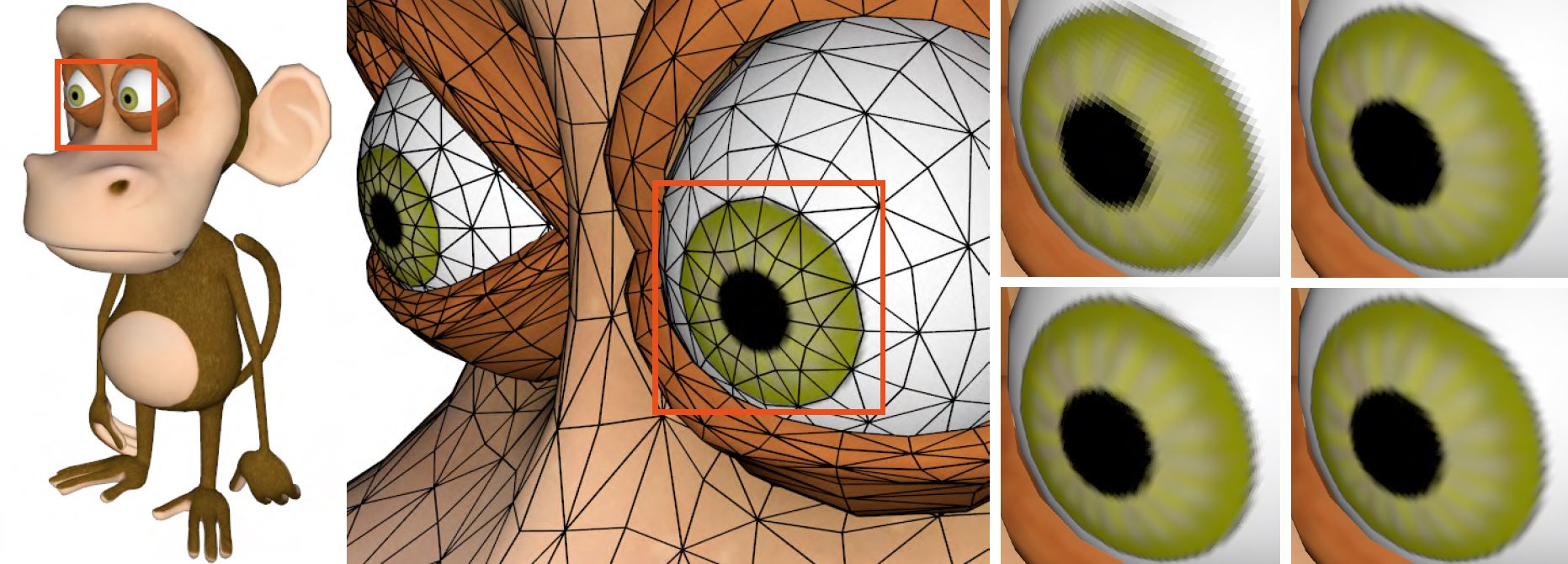

For a long time, GPUs have primarily been optimized to render more and more triangles with increasingly flexible shading. However, scene data itself has typically been generated on the CPU and then uploaded to GPU memory. Consequently, widely used techniques that generate geometry on demand at render time, such as those for rendering smooth and displaced surfaces, were not applicable to interactive applications. Recent advances in graphics hardware, in particular the GPU tessellation unit, overcome this limitation: complex geometry can now be generated on the fly within the GPU's rendering pipeline. Hardware tessellation thus enables the generation of smooth parametric surfaces or the application of displacement mapping in real-time applications. However, many well-established approaches from offline rendering are not directly transferable, because of the limited tessellation patterns or the parallel execution model of the tessellation stage. In this state-of-the-art report, we provide an overview of recent work and challenges on this topic by summarizing, discussing, and comparing methods for rendering smooth and highly detailed surfaces in real time.