The Frankencamera API (in the namespace FCam) provides tools to control the various components of a camera and so facilitate complex photographic operations.

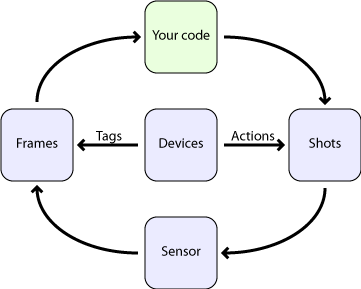

To use the Frankencamera API (FCam), you pass Shots to a Sensor which asynchronously returns Frames. A Shot completely specifies the capture and post-processing parameters of a single photograph, and a Frame contains the resulting image, along with supplemental statistics like a Histogram and SharpnessMap. You can tell Devices (like Lenses or Flashes) to schedule Actions (like firing the flash) to occur at some number of microseconds into a Shot. If timing is unimportant, you can also just tell Devices to do their thing directly from your code. In either case, Devices add tags to returned Frames (like the position of the Lens for that Shot). Tags are key-value pairs, where the key is a string like "focus" and the value is a TagValue, which can represent one of a number of types.

The four basic classes

Shot

A Shot is a bundle of parameters that completely describes the capture and post-processing of a single output Frame. A Shot specifies Sensor parameters such as

- gain,

- exposure time (in microseconds),

- total time (should be at least the exposure time, used to set frame rate),

- output resolution,

- format (raw or demosaiced),

- white balance (only relevant if format is demosaiced),

- memory location into which to place the Image data, and

- unique id (auto-generated on construction).

It also specifies the configuration of the fixed-function statistics generators by specifying

- over which regions Histograms should be computed, and

- over which region and at what resolution a Sharpness Map should be generated.
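
To make the parameter list concrete, here is a minimal sketch of a Shot-like bundle. It is not FCam's Shot class: the names `ShotSketch`, `nextShotId`, `effectiveFrameTime`, and `framesPerSecond`, as well as the field names and default values, are assumptions made for illustration. It only models the id generated on construction and the rule that the total time should be at least the exposure time.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical id source: hands out a fresh id on every call.
inline int nextShotId() {
    static int counter = 0;
    return ++counter;
}

// Hypothetical stand-in for a Shot; field names are illustrative only.
struct ShotSketch {
    float gain = 1.0f;       // sensor gain
    int exposure = 0;        // exposure time in microseconds
    int frameTime = 0;       // total time in microseconds (sets frame rate)
    int width = 640;         // output resolution
    int height = 480;
    bool demosaiced = true;  // false would mean raw output
    int id = nextShotId();   // unique id, generated on construction
};

// The total time cannot be shorter than the exposure, so use the larger.
int effectiveFrameTime(const ShotSketch& s) {
    return std::max(s.frameTime, s.exposure);
}

// Frame rate implied by the effective total time.
double framesPerSecond(const ShotSketch& s) {
    const int t = effectiveFrameTime(s);
    assert(t > 0);
    return 1e6 / t;
}
```

In this sketch a copy of a shot keeps the id of the original, while every newly constructed shot gets a fresh one.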

Sensor

After creation, a Shot can be passed to a Sensor by calling capture or stream.

The Sensor manages an imaging pipeline in a separate thread. A Shot is issued into the pipeline when it reaches the head of the queue of pending shots and the Sensor is ready to begin configuring itself for the next Frame. Capturing a Shot simply appends it to the end of the queue of pending shots. Streaming a Shot causes the Sensor to capture a copy of that Shot whenever the queue becomes empty. You can therefore stream one Shot while capturing others; the captured Shots take priority.
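
The queue behaviour described above can be modelled in a few lines. The following is a toy sketch under stated assumptions, not FCam's Sensor: the class `SensorQueueSketch` and its methods `hasWork` and `issueNext` are hypothetical, and a shot is reduced to a string label so that the ordering is easy to follow.

```cpp
#include <cassert>
#include <deque>
#include <string>

// Toy model of the pending-shot queue; not FCam's Sensor implementation.
class SensorQueueSketch {
public:
    // capture: append one shot to the end of the pending queue.
    void capture(const std::string& shot) { pending_.push_back(shot); }

    // stream: remember a shot to re-issue whenever the queue is empty.
    // Calling it again replaces the previous streaming shot.
    void stream(const std::string& shot) {
        streaming_ = shot;
        hasStream_ = true;
    }

    // True if there is a pending shot or a streaming shot to fall back on.
    bool hasWork() const { return !pending_.empty() || hasStream_; }

    // Called when the sensor is ready to configure itself for the next frame.
    std::string issueNext() {
        assert(hasWork());
        if (pending_.empty()) {
            pending_.push_back(streaming_);  // enqueue a copy of the stream shot
        }
        std::string next = pending_.front();
        pending_.pop_front();
        return next;
    }

private:
    std::deque<std::string> pending_;
    std::string streaming_;
    bool hasStream_ = false;
};
```

With a streaming shot set and two captured shots queued, `issueNext` returns the two captured shots first and falls back to the streaming shot only once the queue is empty.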

If you wish to change the parameters of a streaming Shot, just alter it and call stream again with the updated Shot. This incurs very little overhead and will not slow down streaming (provided you don't change output resolution).

Sensors may also capture or stream STL vectors of Shots, or bursts. Capturing a burst enqueues those shots in the order given, and is useful, for example, to capture a full high-dynamic range stack in the minimal amount of time, or to rotate through a variety of sensor settings at video rate.
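
As an illustration of preparing such a burst, the sketch below builds an exposure bracket as a vector of shots. The type `BurstShotSketch` and the function `makeBracket` are hypothetical, and spacing the exposures one stop apart (doubling each step) is an assumption chosen for the example rather than something FCam prescribes.

```cpp
#include <cassert>
#include <vector>

// Hypothetical minimal shot: only the fields this sketch needs.
struct BurstShotSketch {
    int exposure;  // exposure time in microseconds
    float gain;    // sensor gain
};

// Build a bracketed burst of `count` shots whose exposure doubles at each
// step, starting from `shortestExposure` microseconds.
std::vector<BurstShotSketch> makeBracket(int shortestExposure, int count) {
    assert(shortestExposure > 0 && count > 0);
    std::vector<BurstShotSketch> burst;
    burst.reserve(count);
    int exposure = shortestExposure;
    for (int i = 0; i < count; ++i) {
        burst.push_back(BurstShotSketch{exposure, 1.0f});
        exposure *= 2;  // one stop longer than the previous shot
    }
    return burst;
}
```

A vector built this way is the kind of object that would be handed to the Sensor in a single capture call, so the shots are enqueued back to back in the order given.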

Frame

On the output side, the Sensor produces Frames, retrieved via the getFrame method. This method is the only blocking call in the core API.

A frame contains

- Image data,

- the output of the statistics generators,

- the precise time the exposure began and ended,

- the actual parameters used in its capture (Shot),

- the requested parameters in the form of a copy of the Shot used to generate it, and

- tags placed on it by Devices, in its tags dictionary.

If the Sensor was unable to achieve the requested parameters (for example if the requested frame time was shorter than the requested exposure time), then the actual parameters will reflect the modification made by the system.

Frames can be identified by the id field of their Shot.

The API guarantees that one Frame comes out per Shot requested. Frames are never duplicated or dropped entirely. If Image data is lost or corrupted due to hardware error, a Frame is still returned (possibly with statistics intact), with its Image marked as invalid.
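
Because exactly one Frame comes back per Shot and each Frame carries the id of its Shot, an application can match results to requests and react to invalid images. The following is a minimal sketch of that bookkeeping; `FrameSketch` and `shotsToRetry` are hypothetical names, and the frame is reduced to the two fields the example needs.

```cpp
#include <cassert>
#include <map>
#include <vector>

// Hypothetical reduced frame: just the shot id and the image validity flag.
struct FrameSketch {
    int shotId;       // id of the Shot that produced this frame
    bool imageValid;  // false if image data was lost or corrupted
};

// Return the ids of requested shots whose frame came back with an invalid
// image, in request order, so the caller can decide whether to re-capture.
std::vector<int> shotsToRetry(const std::vector<int>& requestedIds,
                              const std::vector<FrameSketch>& frames) {
    // One frame per requested shot: never duplicated, never dropped.
    assert(requestedIds.size() == frames.size());

    std::map<int, bool> validById;
    for (const FrameSketch& f : frames) {
        validById[f.shotId] = f.imageValid;
    }

    std::vector<int> retry;
    for (int id : requestedIds) {
        std::map<int, bool>::const_iterator it = validById.find(id);
        assert(it != validById.end());  // every shot has a frame
        if (!it->second) retry.push_back(id);
    }
    return retry;
}
```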

Device

A camera is much more than an image sensor. It also includes a Lens, a Flash, and other assorted Devices. In FCam each Device is represented by an object with methods for performing its various functions. Each Device may additionally define a set of Actions, which are used to synchronize these functions to exposure, and a set of tags representing the metadata attached to returned Frames. While the exact list of Devices is platform-specific, FCam includes abstract base classes that specify the interfaces to the Lens and the Flash.

Lens

- The lens is a Device subclass that represents the main lens. It can be asked to initiate a change to any of its three parameters:

- focus (measured in diopters),

- focal length (zooming factor), and

- aperture.

- Each of these calls returns immediately, and the lens starts moving in the background. Each call has an optional second argument that specifies the speed with which the action should occur. Additionally, each parameter can be queried to see if it is currently changing, what its bounds are, and its current value.
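
Since focus is expressed in diopters, it can help to convert to and from a focus distance. A diopter is the reciprocal of a distance in meters, so 0 diopters corresponds to focus at infinity. The helper names below are hypothetical and are not part of the Lens interface.

```cpp
#include <cassert>
#include <limits>

// Convert a focus setting in diopters to a focus distance in meters.
double dioptersToMeters(double diopters) {
    assert(diopters >= 0.0);
    if (diopters == 0.0) {
        return std::numeric_limits<double>::infinity();  // focused at infinity
    }
    return 1.0 / diopters;
}

// Convert a focus distance in meters to a focus setting in diopters.
double metersToDiopters(double meters) {
    assert(meters > 0.0);
    return 1.0 / meters;
}
```

For example, a subject 25 cm away corresponds to 4 diopters, and 2 diopters corresponds to a focus distance of half a meter.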

Flash

- The Flash is another Device subclass that has a single method that tells it to fire with a specified brightness and duration. It also has methods to query bounds on brightness and duration.

Action

- In many applications, the timing of device actions must be precisely coordinated with the image sensor to create a successful photograph. For example, the timing of flash firing in a conventional second-curtain sync flash photograph must be accurate to within a millisecond.

- To facilitate this, each device may define a set of Actions as nested classes. They include

- a start time relative to the beginning of image exposure,

- a method that will be called to initiate the action, and

- a latency field that indicates the delay between the method call and the action actually beginning.

- Any Device with predictable latency can thus have its Actions precisely scheduled. The Lens defines three Actions, one to initiate a change in each lens parameter. The Flash defines a single Action to fire it. Each Shot contains a set of Actions (a standard STL set) scheduled to occur during the corresponding exposure.
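
The arithmetic behind scheduling can be sketched as follows: to make an action begin at a given time, its method has to be called earlier by the amount of the latency. This is an illustrative model only; `ActionSketch`, `callTime`, and `secondCurtainFlash` are hypothetical names, and all times are in microseconds relative to the start of the exposure.

```cpp
#include <cassert>

// Hypothetical reduced action: when it should start, and how long the
// device takes to react once its method has been called.
struct ActionSketch {
    int time;     // desired start, microseconds after exposure begins
    int latency;  // delay between the method call and the action starting
};

// When the initiating method must be called, relative to exposure start.
// A negative result means the call has to happen before the exposure begins.
int callTime(const ActionSketch& a) {
    return a.time - a.latency;
}

// Second-curtain sync: schedule the flash so that it finishes exactly when
// the exposure ends.
ActionSketch secondCurtainFlash(int exposure, int flashDuration, int flashLatency) {
    assert(flashDuration <= exposure);
    return ActionSketch{exposure - flashDuration, flashLatency};
}
```

For a 100 ms exposure, a 1 ms flash, and an assumed flash latency of 150 microseconds, the flash starts 99000 microseconds into the exposure and its method is called at 98850 microseconds.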

Tags

- Devices may also gather useful information or metadata during an exposure, which they may attach to returned Frames by including them in the tags dictionary. Frames are tagged after they leave the pipeline, so this typically requires Devices to keep a short history of their recent state, and correlate the timestamps on the Frame they are tagging with that history.

- The Lens and Flash tag each Frame with the state they were in during the exposure. This makes writing an autofocus algorithm straightforward; the focus position of the Lens is known for each returned Frame. Other appropriate uses of tags include sensor fusion and inclusion of fixed-function imaging blocks.
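
One way a device could correlate a Frame's timestamp with its own recent state is to keep a time-ordered history and look up the latest sample at or before the exposure time. The sketch below assumes exactly that; `LensSample` and `focusAt` are hypothetical names, and a real device may well interpolate between samples or organise its history differently.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// One entry of the hypothetical state history kept by a lens-like device.
struct LensSample {
    long long time;  // microseconds, on the same clock as frame timestamps
    float focus;     // lens position in diopters at that time
};

// Return the focus recorded most recently at or before time `t`.
// `history` must be non-empty and sorted by increasing time.
float focusAt(const std::vector<LensSample>& history, long long t) {
    assert(!history.empty());
    // Find the first sample strictly later than t.
    std::vector<LensSample>::const_iterator later = std::upper_bound(
        history.begin(), history.end(), t,
        [](long long value, const LensSample& s) { return value < s.time; });
    if (later == history.begin()) {
        return history.front().focus;  // t precedes the recorded history
    }
    return (later - 1)->focus;
}
```

The value returned for a Frame's exposure start time is what such a device would then store in the Frame's tags dictionary under a key like "focus".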

FCam Platforms

Each platform defines its own versions of Sensor, Lens, and Flash, and optionally may define its own Shot and Frame. These live in a namespace named after the platform. You can thus refer to them as FCam::MyPlatform::Shot.

The F2

The N900

Other Useful Features

Events

- Some devices generate asynchronous Events. These include user input Events like the feedback from a manual zoom Lens or phidgets device, as well as asynchronous error conditions like Lens removal. We maintain an Event queue for the application to retrieve and handle these Events. To retrieve an Event from the queue, use getNextEvent.

Autofocus, metering, and white-balance

- FCam is sufficiently low level for you to write your own high-performance metering and autofocus functions. For convenience, we include an AutoFocus helper object, and autoExpose and autoWhiteBalance helper functions.
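
As a rough idea of what a hand-written autofocus function might look like, the sketch below picks the sharpest frame from a focus sweep. It is not the AutoFocus helper: `SweepFrame` and `sharpestFocus` are hypothetical, and it assumes each frame has already been reduced to the focus position read from its tag and a single sharpness score (for instance, a sum over the sharpness map).

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical summary of one frame captured during a focus sweep.
struct SweepFrame {
    float focus;      // lens position in diopters, read from the frame's tag
    float sharpness;  // scalar sharpness score for the frame
};

// Return the focus setting of the sharpest frame in the sweep.
// If several frames tie, the earliest one wins.
float sharpestFocus(const std::vector<SweepFrame>& sweep) {
    assert(!sweep.empty());
    std::size_t best = 0;
    for (std::size_t i = 1; i < sweep.size(); ++i) {
        if (sweep[i].sharpness > sweep[best].sharpness) best = i;
    }
    return sweep[best].focus;
}
```

The returned value is the position the lens would then be asked to move to. A practical implementation would usually refine the result with a finer sweep around that position.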

File I/O and Post-Processing

- To synchronously save files to disk, see saveDNG, saveDump, and saveJPEG. The latter will demosaic, white balance, and gamma correct your image if necessary. To asynchronously save files to disk (in a background thread), see AsyncFileWriter.

- You can also load files you have saved with loadDNG and loadJPEG. Loading or saving in either format will preserve all tags devices have placed on the frame.

- We encourage you to use your favorite image processing library for more complicated operations. FCam Image objects can act as weak references to the memory of another library's image object, to support this interoperability.

Examples

For example code, including Makefiles, refer to the examples bundle that comes with this document. It includes the following programs.

- example3: Capture a photograph during which the focus ramps from near to far. Demonstrates use of a Device, a Lens Action, and a Tag.

- example4: Stream frames and auto expose. Demonstrates streaming and auto-exposure.

- example5: Stream frames and focus. Demonstrates streaming and auto-focus.

- example6: Make a noise synchronized to the start of the exposure. Demonstrates making a custom Device, and synchronizing that device's Action with exposure.

- example7: A full camera application using Qt, with metering, focus, drawing viewfinder frames, drawing on top of viewfinder frames with OpenGL, and saving out JPEGs and DNGs asynchronously with the AsyncFileWriter.

1.7.1
